
SIGGRAPH 2026

TL;DR: We introduce Raster2Seq, a framework reformulating Raster2Vector conversion as next-corner prediction, handling floorplans of arbitrary length.

Reconstructing a structured vector-graphics representation from a rasterized floorplan image is typically an important prerequisite for computational tasks involving floorplans, such as automated understanding or CAD workflows. However, existing techniques struggle to faithfully generate the structure and semantics conveyed by complex floorplans that depict large indoor spaces with many rooms and varying numbers of polygon corners. To this end, we propose Raster2Seq, which frames floorplan reconstruction as a sequence-to-sequence task in which floorplan elements (such as rooms, windows, and doors) are represented as labeled polygon sequences that jointly encode geometry and semantics. Our approach introduces an autoregressive decoder that learns to predict the next corner conditioned on image features and previously generated corners, guided by learnable anchors. These anchors represent spatial coordinates in image space, which lets them direct the attention mechanism toward informative image regions. By embracing the autoregressive mechanism, our method offers flexibility in the output format, enabling efficient handling of complex floorplans with numerous rooms and diverse polygon structures. Our method achieves state-of-the-art performance on standard benchmarks such as Structured3D, CubiCasa5K, and Raster2Graph, while also demonstrating strong generalization to more challenging datasets like WAFFLE, which contains diverse room structures and complex geometric variations.

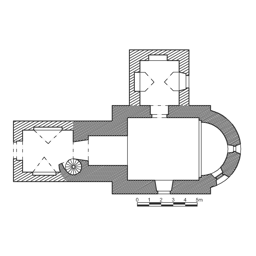

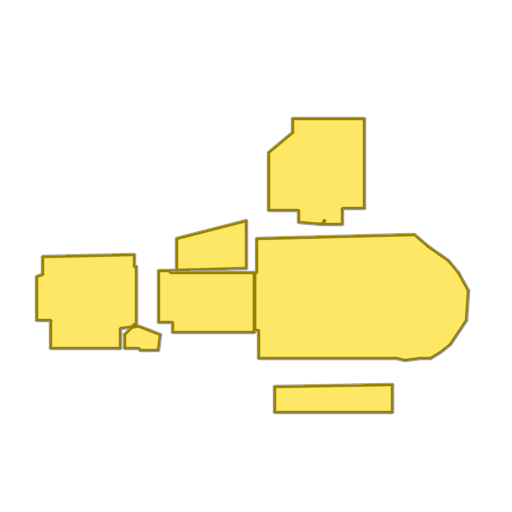

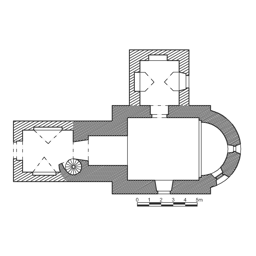

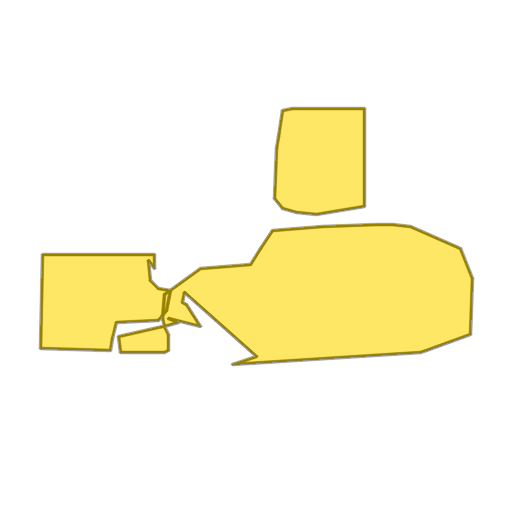

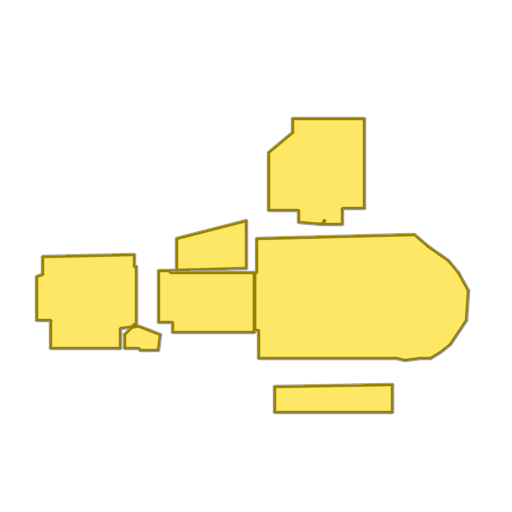

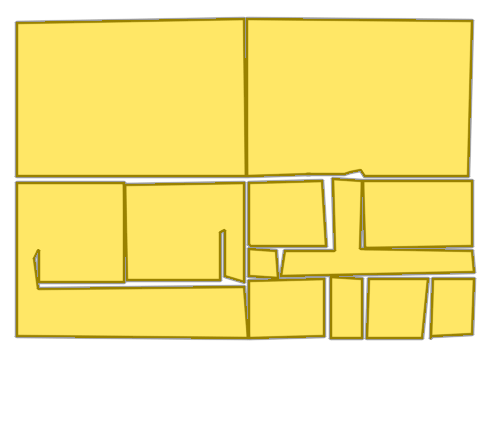

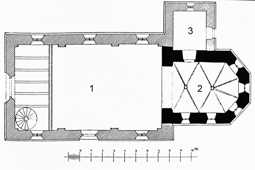

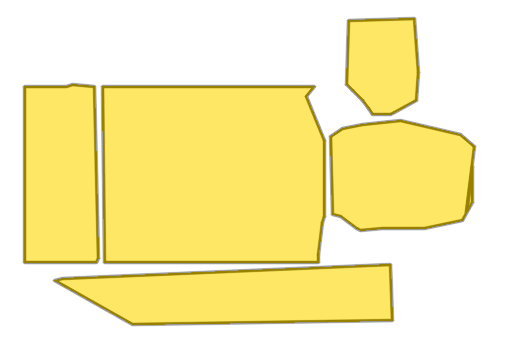

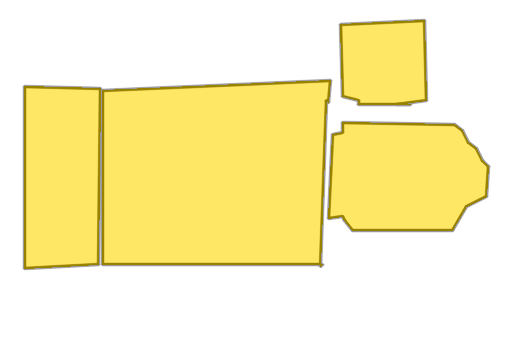

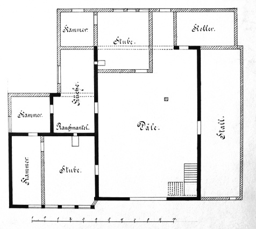

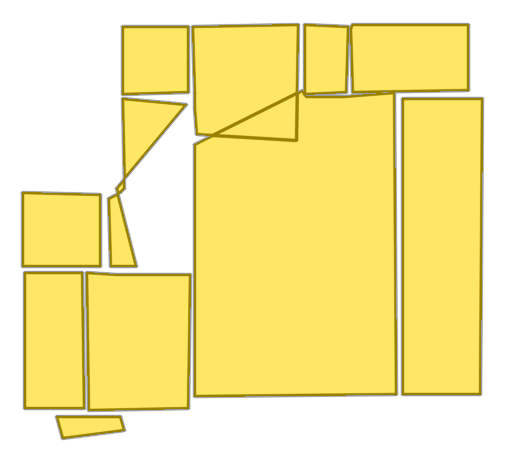

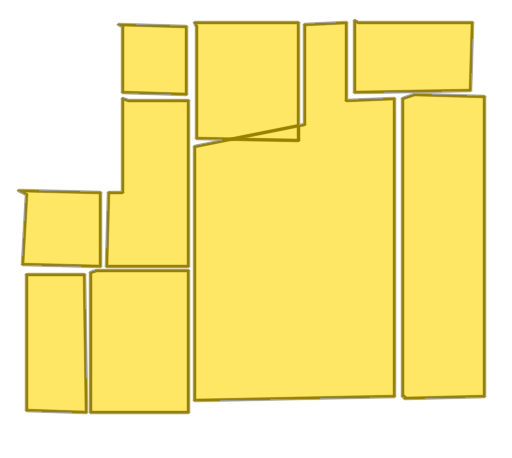

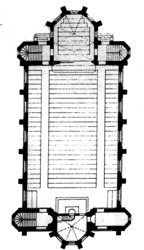

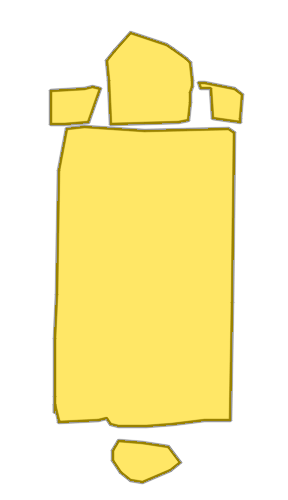

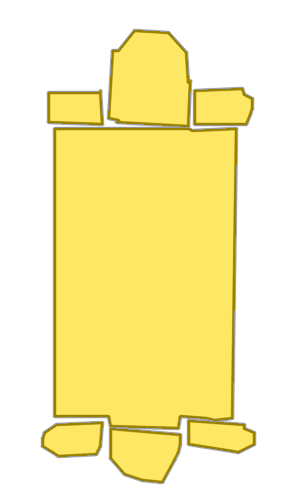

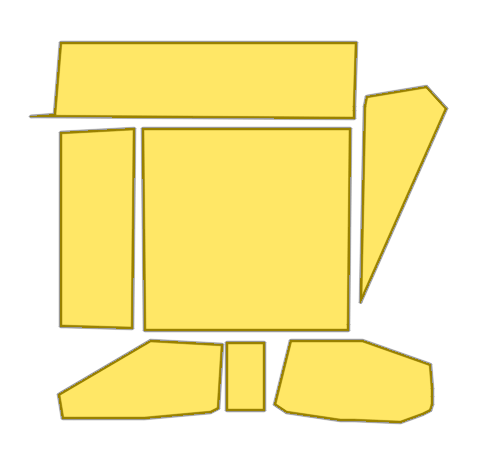

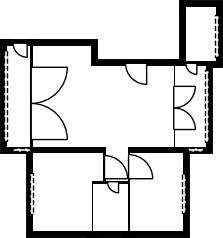

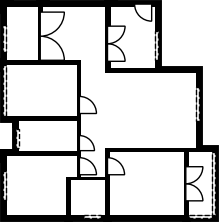

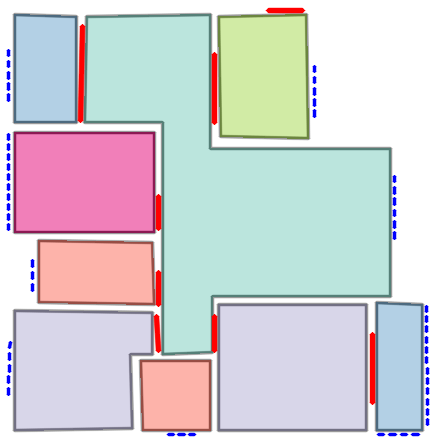

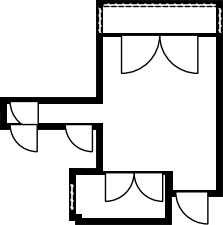

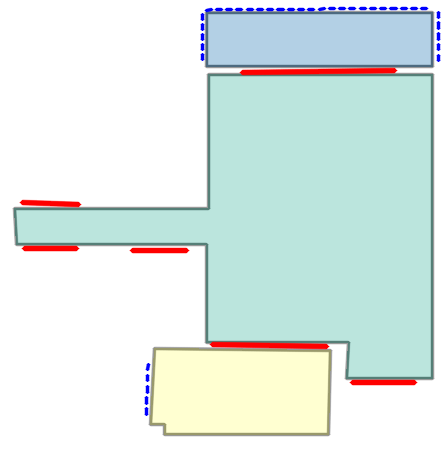

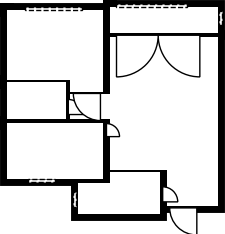

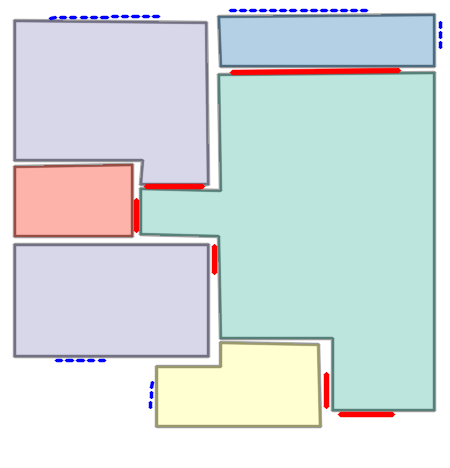

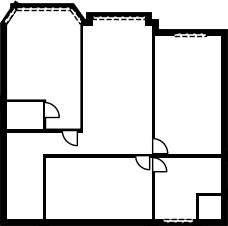

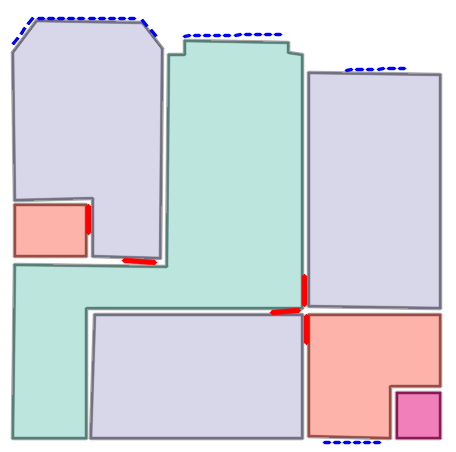

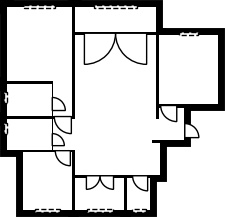

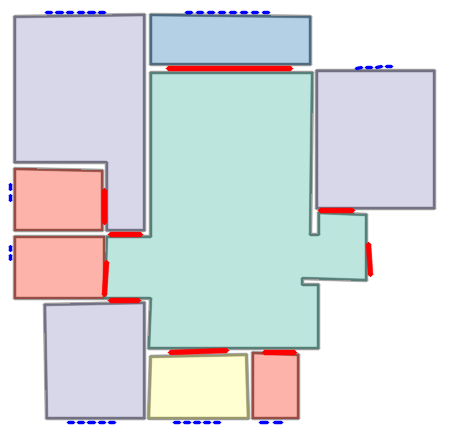

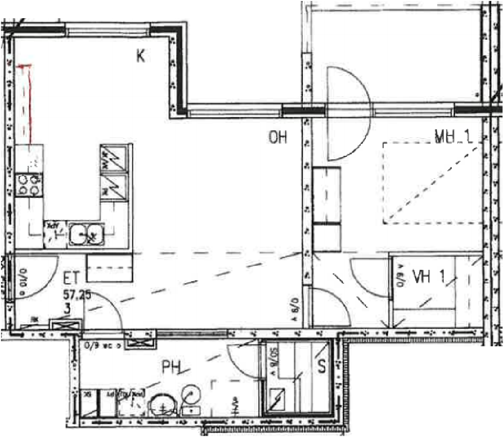

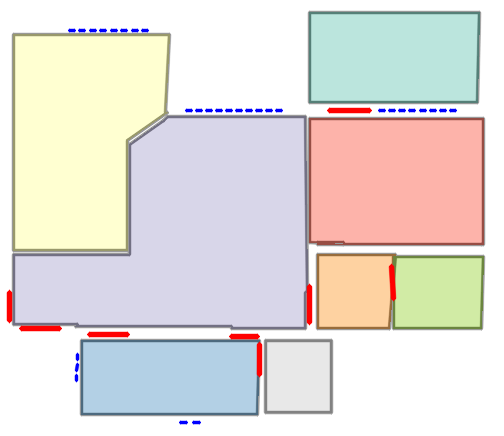

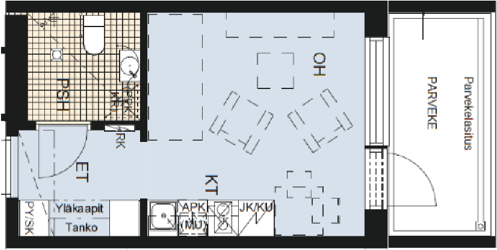

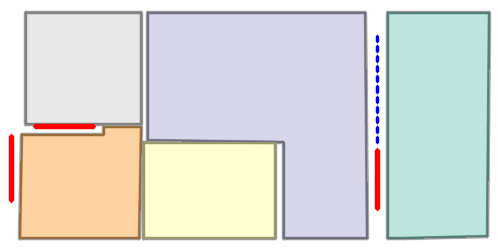

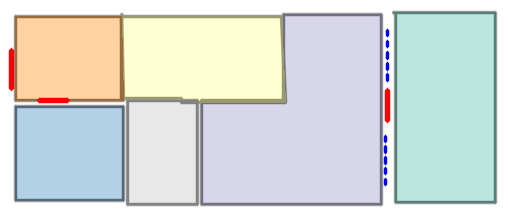

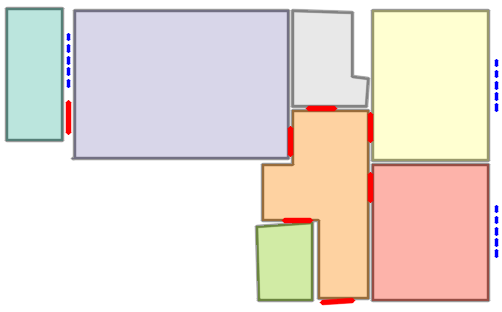

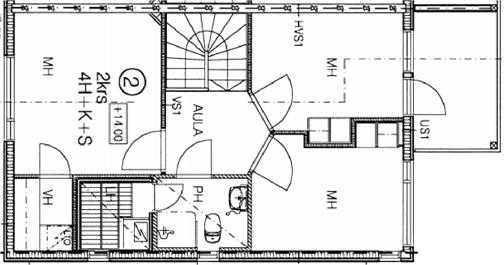

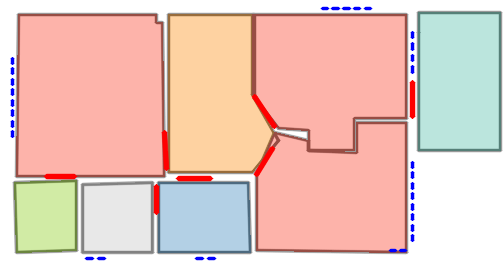

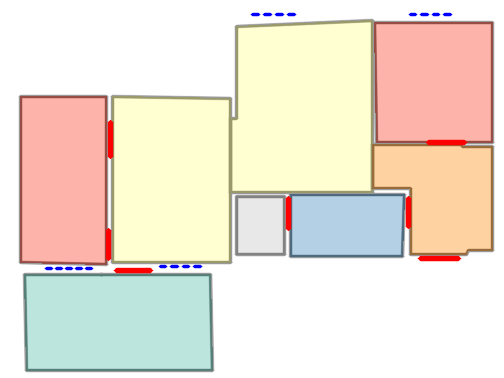

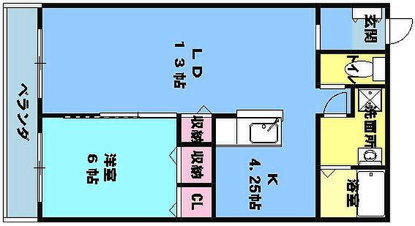

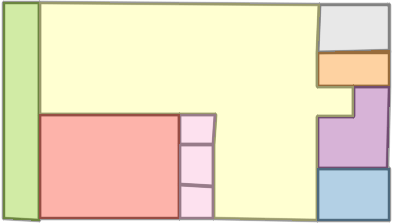

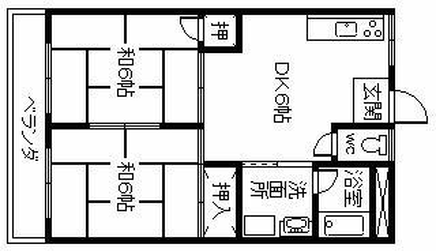

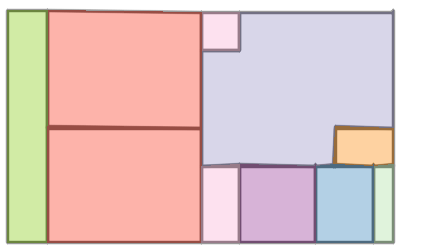

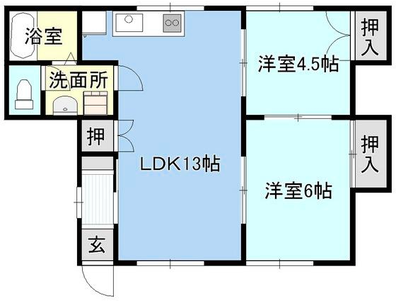

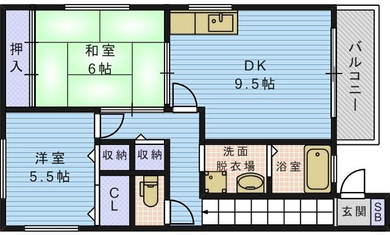

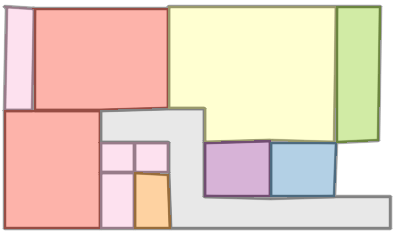

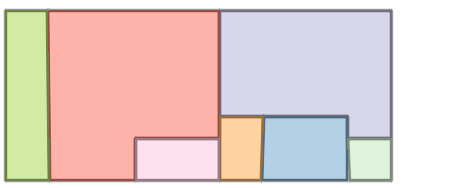

Qualitative comparison with RoomFormer on unseen WAFFLE floorplan images; both models are trained on CubiCasa5K. As illustrated below, our model generalizes better to the structures found in real-world Internet data.

Church of Saint James

Teltow Canal Power Station

Church of Saint Nicholas

Imkerhaus

Palais du Louvre

Palmer Mansion

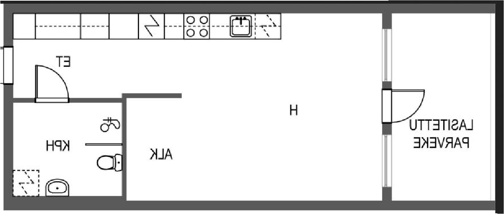

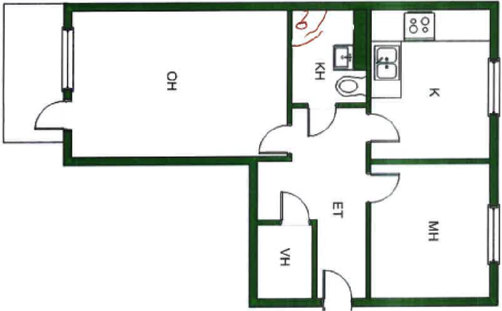

Each example shows a pair of images: the input image and the output reconstruction generated by Raster2Seq. Use the tabs to browse qualitative results across the Structured3D-B, CubiCasa5K, and Raster2Graph datasets.

*Structured3D-B denotes our binary raster version of Structured3D, constructed from ground-truth annotations to resemble standard floorplan drawings rather than the density-map inputs used in the original dataset.

Quantitative comparison on Structured3D, CubiCasa5K, and Raster2Graph datasets, evaluating F1 scores across geometric predictions (Room, Corner, Angle) and semantic predictions (Room Semantic, Window & Door).

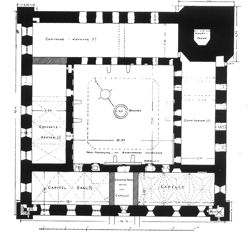

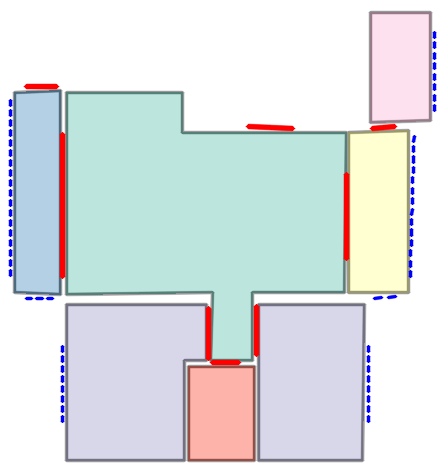

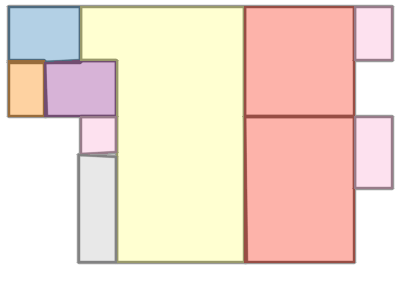
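For readers less familiar with the metric, the F1 scores in the tables above combine precision and recall into a single number. The sketch below shows only that generic definition, computed from match counts. How a predicted room, corner, or angle is matched to the ground truth is not specified on this page, so the counts are treated as given, and the function name `f1_score` is illustrative.

```python
def f1_score(true_positives: int, false_positives: int, false_negatives: int) -> float:
    """Harmonic mean of precision and recall, computed from match counts."""
    predicted = true_positives + false_positives   # everything the model output
    actual = true_positives + false_negatives      # everything in the ground truth
    if predicted == 0 or actual == 0:
        return 0.0
    precision = true_positives / predicted
    recall = true_positives / actual
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Example with made-up counts: 8 matched rooms, 2 spurious, 2 missed.
print(round(f1_score(8, 2, 2), 3))
```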

We perform a cross-evaluation experiment across different train-test dataset configurations, using the metrics reported previously: RoomF1 for the CubiCasa5K and Raster2Graph datasets and IoU for WAFFLE. Cross-evaluation heatmaps show performance across evaluation datasets (rows) and training datasets (columns), with hotter colors denoting higher performance.

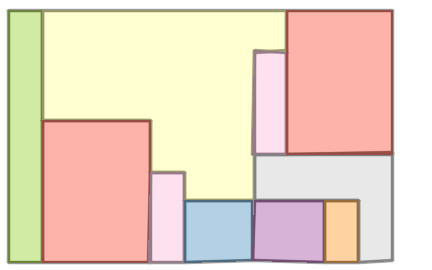

Performance vs. floorplan complexity, as approximated by the total number of polygons (left) and the total number of corners (right). As illustrated above for Structured3D-B (top) and CubiCasa5K (bottom), our approach yields larger gains as floorplan complexity increases.

Reconstructing a structured vector-graphics representation from a rasterized floorplan image is a fundamental prerequisite for computational tasks involving floorplans, such as automated understanding or CAD workflows. However, existing techniques struggle to faithfully generate the structure and semantics conveyed by complex floorplans that depict large indoor spaces with many rooms and varying numbers of polygon corners. One popular paradigm is to predict all structural floorplan elements simultaneously, as in RoomFormer and FRI-Net. While these models perform similarly on simpler cases, they exhibit a notable performance drop in complex scenes with more than 15 polygons or 150 corners. As shown in the figure above, our method remains more robust as floorplan complexity increases. In particular, RoomFormer relies on a fixed number of room queries (e.g., 2800); exceeding this capacity can trigger out-of-memory errors and increase computation due to quadratic attention costs. By contrast, our method formulates floorplan conversion as a sequence-to-sequence task, generating polygon coordinates autoregressively. This naturally handles variable-length polygons while allowing us to decompose floorplan reconstruction into interpretable, sequential predictions that mirror the natural CAD design workflow.

📜 Labeled corner sequence representation. Each polygon is represented as a sequence of labeled corners — spatial coordinates paired with semantic labels (rooms, windows, doors) — and polygons are sorted left-to-right across the floorplan. This representation naturally accommodates inputs and outputs of variable lengths.

🔗 Anchor-based autoregressive decoder. The core of our framework predicts the next labeled corner by fusing image features and previously generated corners, guided by learnable anchors that steer attention toward informative image regions for efficient handling of complex floorplans.

🏷️ Token-level semantic supervision. A per-corner semantic classification loss applied to individual corner embeddings preserves semantic fidelity throughout autoregressive generation.
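The control flow of next-corner prediction can be sketched as a plain greedy decoding loop. This is an illustration under stated assumptions, not the released implementation: `next_token` stands in for the anchor-based decoder (which would also consume image features and anchors), the `<EOS>` stop marker is an assumption not named on this page, and `scripted_predictor` is a toy stand-in that replays a fixed sequence.

```python
from typing import Callable, List, Tuple, Union

# A token is either a labeled corner (x, y, label) or a special string.
Corner = Tuple[float, float, str]
Token = Union[Corner, str]

SEP = "<SEP>"  # polygon delimiter
EOS = "<EOS>"  # assumed end-of-sequence marker (hypothetical)


def decode(next_token: Callable[[List[Token]], Token], max_len: int = 1024) -> List[Token]:
    """Generate tokens one at a time, each conditioned on the prefix so far."""
    tokens: List[Token] = []
    for _ in range(max_len):
        tok = next_token(tokens)
        if tok == EOS:
            break
        tokens.append(tok)
    return tokens


def scripted_predictor(script: List[Token]) -> Callable[[List[Token]], Token]:
    """Toy stand-in for a trained decoder: replays `script`, then emits EOS."""
    def predict(prefix: List[Token]) -> Token:
        return script[len(prefix)] if len(prefix) < len(script) else EOS
    return predict


script: List[Token] = [
    (0.0, 0.0, "room"), (4.0, 0.0, "room"), (4.0, 3.0, "room"),
    SEP,
    (4.0, 1.0, "door"), (4.0, 2.0, "door"),
]
out = decode(scripted_predictor(script))
```

Because the loop stops only when the predictor signals the end (or at `max_len`), the output length is not tied to a fixed number of queries.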

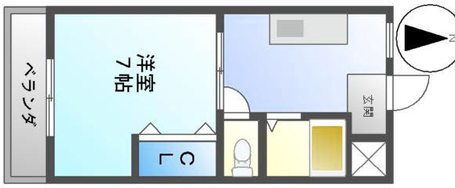

Given a rasterized floorplan image (left), Raster2Seq converts it into a vectorized representation as a labeled polygon sequence, with polygons delimited by special <SEP> tokens. The core component is an anchor-based autoregressive decoder that predicts the next token from image features (\(f_\text{img}\)), learnable anchors (\(v_\text{anc}\)), and previously generated tokens. Above, we visualize the first two predicted labeled polygons (in orange and pink, respectively).
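To make the labeled polygon sequence concrete, here is a minimal sketch of flattening polygons into a single token list delimited by `<SEP>` and splitting it back. The helper names, the tuple layout, and the sort key (each polygon's smallest x-coordinate as a proxy for left-to-right order) are assumptions for illustration; the actual tokenization may differ.

```python
from typing import List, Tuple, Union

Point = Tuple[float, float]
Polygon = Tuple[str, List[Point]]                 # (semantic label, corners)
Token = Union[Tuple[float, float, str], str]      # labeled corner or special token

SEP = "<SEP>"


def to_sequence(polygons: List[Polygon]) -> List[Token]:
    """Flatten polygons into one labeled-corner sequence, ordered left to right."""
    # Assumed sort key: the smallest x-coordinate of each polygon.
    ordered = sorted(polygons, key=lambda p: min(x for x, _ in p[1]))
    seq: List[Token] = []
    for i, (label, corners) in enumerate(ordered):
        if i > 0:
            seq.append(SEP)
        seq.extend((x, y, label) for x, y in corners)
    return seq


def from_sequence(seq: List[Token]) -> List[Polygon]:
    """Split a labeled-corner sequence at <SEP> tokens back into polygons."""
    polygons: List[Polygon] = []
    current: List[Tuple[float, float, str]] = []
    for tok in seq + [SEP]:  # the trailing SEP flushes the last polygon
        if tok == SEP:
            if current:
                # Assumption: a polygon takes the label of its first corner.
                polygons.append((current[0][2], [(x, y) for x, y, _ in current]))
                current = []
        else:
            current.append(tok)
    return polygons


door: Polygon = ("door", [(5.0, 1.0), (5.0, 2.0)])
room: Polygon = ("room", [(0.0, 0.0), (4.0, 0.0), (4.0, 3.0), (0.0, 3.0)])
seq = to_sequence([door, room])
```

The sequence simply grows with the number of polygons and corners, which is what lets the representation accommodate floorplans of any size.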

While our method outperforms existing works across various metrics, it does not directly enforce geometric constraints, which can cause predicted outputs to exhibit artifacts on noisy datasets such as CubiCasa5K (see results below). To address this, we introduce a VLM-based vectorization refinement procedure that naturally builds on our polygon sequence representation and further improves reconstruction accuracy, highlighting the flexibility of our representation for integrating higher-level reasoning modules.

Given an input JSON specifying the vectorized floorplan predicted by our method, a VLM refines it using the rasterized floorplan, the vectorized overlay, the vectorized floorplan alone, and the adjacency graph as additional inputs. Users can specify geometric constraints in the refinement prompt; the VLM then outputs the refined JSON.
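As a rough illustration of the JSON round trip in this step, the sketch below serializes predicted polygons together with user constraints and parses a reply in the same layout. The schema (`polygons`, `id`, `label`, `corners`, `constraints`) and both function names are hypothetical, since the actual format is not given here. The image inputs, the adjacency graph, and the VLM call itself are omitted.

```python
import json
from typing import Dict, List, Tuple

Polygon = Tuple[str, List[Tuple[float, float]]]  # (semantic label, corners)


def build_refinement_request(polygons: List[Polygon], constraints: List[str]) -> str:
    """Serialize predicted polygons plus user constraints into a JSON string."""
    payload: Dict[str, object] = {
        # Hypothetical schema: one object per polygon.
        "polygons": [
            {"id": i, "label": label, "corners": [[x, y] for x, y in corners]}
            for i, (label, corners) in enumerate(polygons)
        ],
        "constraints": constraints,
    }
    return json.dumps(payload, indent=2)


def parse_refined(text: str) -> List[Polygon]:
    """Read a JSON reply in the same layout back into (label, corners) polygons."""
    data = json.loads(text)
    return [
        (p["label"], [(float(x), float(y)) for x, y in p["corners"]])
        for p in data["polygons"]
    ]


predicted: List[Polygon] = [
    ("room", [(0.0, 0.0), (4.0, 0.1), (4.0, 3.0), (0.0, 3.0)]),
    ("door", [(4.0, 1.0), (4.0, 2.0)]),
]
request = build_refinement_request(predicted, ["walls are axis-aligned"])
```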

Floorplan vectorization unlocks a range of computational tasks that are difficult or impossible in pixel space. We demonstrate this for controllable 3D scene generation: the vectorized floorplan is extruded into a coarse 3D volume, which guides TRELLIS, a 3D generative backbone, through SpaceControl, a test-time approach. The results show that floorplan vectorization provides a strong geometric prior, allowing the 3D generative model to faithfully reproduce complex architectural layouts from a single input RGB image while maintaining global structural consistency.
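The extrusion step is easy to picture for a single polygon: copy the 2D outline at floor and ceiling height and connect the two rings with wall quads. The sketch below is a minimal version under assumptions; the wall height, the vertex layout, and the function name `extrude` are illustrative, and how the resulting volume is passed to TRELLIS or SpaceControl is not shown.

```python
from typing import List, Tuple

Point2D = Tuple[float, float]
Point3D = Tuple[float, float, float]
Quad = Tuple[int, int, int, int]  # indices into the vertex list


def extrude(polygon: List[Point2D], height: float) -> Tuple[List[Point3D], List[Quad]]:
    """Extrude a 2D polygon into a prism: floor ring, ceiling ring, wall quads."""
    n = len(polygon)
    floor = [(x, y, 0.0) for x, y in polygon]
    ceiling = [(x, y, height) for x, y in polygon]
    vertices = floor + ceiling  # floor vertices are 0..n-1, ceiling n..2n-1
    # One quad per polygon edge: floor i -> floor i+1 -> ceiling i+1 -> ceiling i.
    walls = [(i, (i + 1) % n, n + (i + 1) % n, n + i) for i in range(n)]
    return vertices, walls


# Example: a 4 x 3 room extruded to an assumed height of 2.5 units.
room = [(0.0, 0.0), (4.0, 0.0), (4.0, 3.0), (0.0, 3.0)]
vertices, walls = extrude(room, height=2.5)
```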

@inproceedings{phung2026raster2seq,
  title = {Raster2Seq: Polygon Sequence Generation for Floorplan Reconstruction},
  author = {Phung, Hao and Averbuch-Elor, Hadar},
  booktitle = {Special Interest Group on Computer Graphics and Interactive Techniques Conference Conference Papers},
  year = {2026},
}